Automating Doctor Recruitment in Healthcare Facilities with AI

> The presented article describes a tool that is part of the healthcare platform we have developed.

Read →Long-form writing from the people who ship the systems — not marketing. New essays go out roughly weekly.

> The presented article describes a tool that is part of the healthcare platform we have developed.

Read →

Generative Adversarial Networks (GANs) that appeared in 2014 made it possible not only to perform image style transfer but also to generate text to speech with any voice.

Read →Everyone interested in computer vision applications has faced an object tracking problem at least once in their life. In this article, we will consider OpenCV object tracking methods and the algorithms behind them to help you choose the best solution in your workflow.

Read →

In this article, we discuss topics such as adapting transformer architecture from NLP for image processing. We will explore novel vision transformers architectures and their application to сomputer vision problems: object detection, semantic segmentation, depth prediction.

Read →

Computer vision in healthcare is revolutionizing patient care with applications in disease diagnosis, surgery, and drug discovery. Despite challenges like data privacy and regulations, its market is set for rapid growth, driven by tech advancements and the demand for precision me

Read →

Deep learning is used in various fields of knowledge, such as medicine, computer vision systems, production automation systems, etc.

Read →

Each data scientist conducts many different experiments in order to find the best set of hyperparameters. In this article, we will consider the platform, which allows you to efficiently manage and log your experiments - Weights & Biases.

Read →

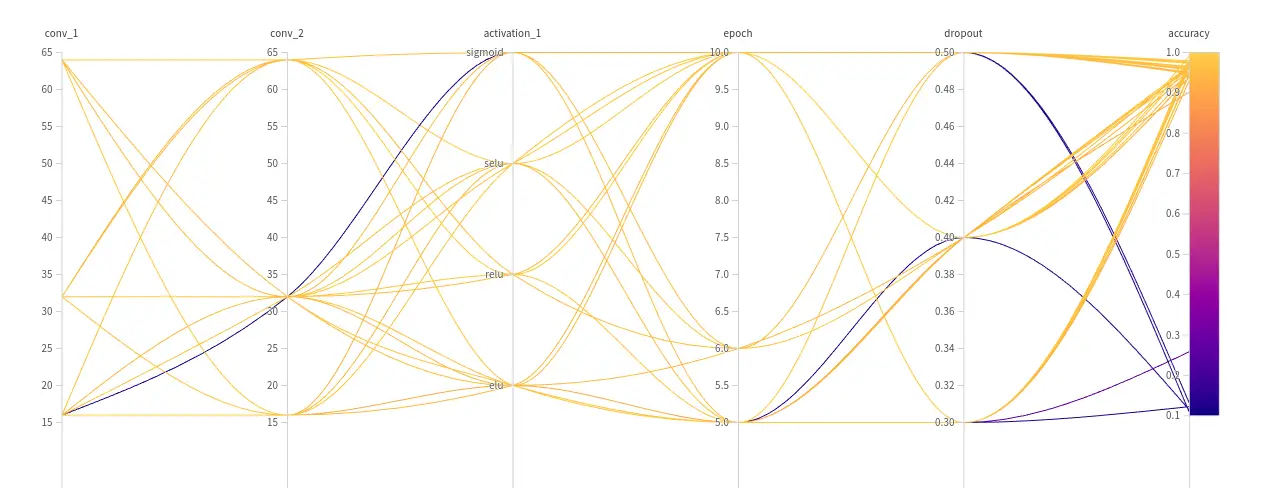

Hyperparameters optimization is an essential part of machine learning and deep learning projects. Manually selecting the best hyperparameters is not easy. This is the case especially for complex models such as neural networks.

Read →

Data science has been an essential component of the finance sector for a while now. The finance industry provides the necessary ingredient for deep learning - big data.

Read →